Facial Recognition May Misidentify Lawful Citizens as Suspects, Say Experts

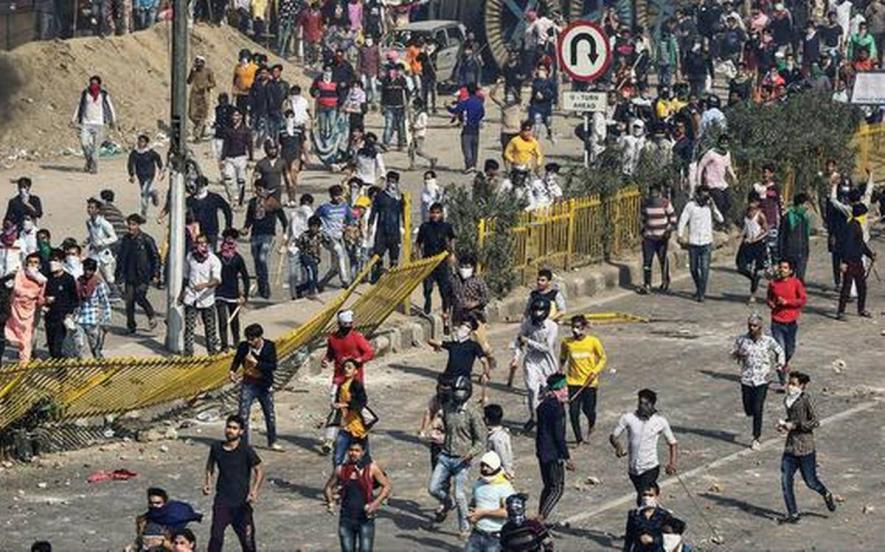

Representational image. | Image Courtesy: The Hindu

New Delhi: Union Minister for Home Affairs Amit Shah told Parliament on March 11 that security agencies had used facial recognition technology to identify the rioters who fomented communal violence in the North-East region of the national capital between February 24 and 26. As many as 53 people lost their lives and hundreds were injured in what many have called a pogrom. Property worth crores of rupees was either reduced to ashes or damaged beyond repair.

Speaking in the Lok Sabha during a debate on the violence in Delhi, the worst the city has seen in decades, Shah said: “We have used facial recognition software to initiate the process of identifying faces. We have fed voter ID data into it, we have fed driving licence and all other government data into it. More than 1,100 people have already been identified through this software.”

In a first-of-its-kind admission, the Home Minister elaborated: “Over 300 people from Uttar Pradesh came in to cause riots here. The facial data that we had ordered from UP makes it clear that this was deep conspiracy.”

Responding to AIMIM (All India Majlis-e-Ittehad-ul-Muslimeen) chief Asaduddin Owaisi’s appeal that innocent people should not be dragged into this through the software, he added: “Owaisi Sahab, this is a software. It does not see faith, it does not see clothes. It only sees the face and through the face the person is caught.”

What Shah did not disclose were the details of the software (whether it relies on biometrics or on other data) or which law enforcement agency had deployed it.

Meanwhile, critics and experts have raised privacy concerns and urged the government to frame a policy or law before deploying such technology. They said the law or policy should be robust and include privacy impact assessments, because the technology is riddled with problems and can falsely identify lawful citizens as suspects in an investigation.

Automated facial recognition (AFR) technology uses cameras to capture images of faces and checks them against databases of wanted suspects.
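The government has not said how the system used in Delhi works. As a rough illustration only, the Python sketch below shows the generic one-to-many matching step that AFR systems are commonly described as performing: each face is reduced to a list of numbers (a feature vector), the vector from a captured image is compared with every stored record, and the closest record is reported as a match only if its similarity score clears a threshold. The function names, the three-number vectors and the 0.8 threshold are hypothetical; real systems derive far longer vectors from trained neural networks.

```python
import math
from typing import Dict, List, Optional, Tuple

# Hypothetical sketch: faces are represented as plain feature vectors.
# Real systems compute these with trained models; here they are hand-written.


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Return the cosine similarity of two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def match_face(
    probe: List[float],
    gallery: Dict[str, List[float]],
    threshold: float = 0.8,  # hypothetical cut-off; real vendors tune this value
) -> Optional[Tuple[str, float]]:
    """Compare a probe face with every stored template (one-to-many search).

    Returns (record_id, score) for the most similar template if its score
    reaches the threshold, otherwise None.
    """
    best_id: Optional[str] = None
    best_score = -1.0
    for record_id, template in gallery.items():
        score = cosine_similarity(probe, template)
        if score > best_score:
            best_id, best_score = record_id, score
    if best_id is not None and best_score >= threshold:
        return best_id, best_score
    return None


if __name__ == "__main__":
    gallery = {"record_A": [1.0, 0.0, 0.0], "record_B": [0.0, 1.0, 0.0]}
    print(match_face([0.9, 0.1, 0.0], gallery))  # close to record_A: reported as a match
    print(match_face([0.6, 0.6, 0.5], gallery))  # resembles neither closely: None
    print(match_face([0.6, 0.6, 0.5], gallery, threshold=0.5))  # lower bar: a "match"
```

The last call illustrates the experts' concern in miniature: a face that resembles no stored record closely is still reported as the nearest one once the threshold is lowered, which is one way a threshold-based system can produce a false positive.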

According to the Internet Freedom Foundation (IFF), accessing and using an individual's Aadhaar biometric data without authorisation from a court of law, or in the absence of legislation, violates the Supreme Court's judgment in KS Puttaswamy vs Union of India.

Questioning whether the technology is sophisticated enough to identify culprits, the group said the system’s accuracy figures were alarmingly low. As recently as August 2019, the system deployed by the Delhi Police to trace missing children had failed even to distinguish between genders.

Devdutta Mukhopadhyay, Associate Counsel at the digital rights organisation, said there were various problems with the deployment of facial recognition technology in India.

“The accuracy of facial recognition systems will vary depending on who is the vendor, what kind of training data was used, what are the technical specifications of the system etc. The Delhi Police originally acquired its facial recognition system to identify missing children, but the system abysmally failed at this task. As admitted by the Delhi Police and Women and Child Development Ministry before the Delhi High Court, the system had an accuracy rate of 2% in 2018 which dropped to 1% in 2019 and it couldn’t even distinguish between boys and girls,” she said.

Mukhopadhyay added that facial recognition technology was still evolving and that concerns about its accuracy and discriminatory biases must be addressed before these systems are deployed on a large scale.

Referring to the situation in foreign jurisdictions, she noted: “In the American context, there is a significant body of empirical evidence which demonstrates that facial recognition technology is more likely to misidentify African-Americans and women. Due to these biases, many states have either banned or imposed a moratorium on the use of facial recognition technology.”

The other big concern, she said, was the absence of any legislative framework to govern the use of facial recognition technology in India.

“Not only do we lack a specific law authorising the use of facial recognition technology, we don’t even have a general data protection legislation yet. Facial features are a type of biometric data, which is classified as sensitive personal data and their non-consensual use must strictly adhere to constitutional principles of necessity and proportionality,” she added.

Facial recognition systems, she pointed out, would allow existing surveillance infrastructure to be used for real-time monitoring of citizens. “There is mass installation of CCTV cameras across the country and this is also happening without any legislative basis or procedural safeguards. With CCTV camera and facial recognition technology, it would be possible for the government to track any citizen at any time. So, even if facial recognition systems are 100% accurate, which they are most definitely not at the moment, they could still facilitate mass surveillance and have a chilling effect on freedom of speech, assembly and movement of citizens,” she concluded.

A former top cop who has served with the city police also raised concerns about the dangers of using facial recognition technology in the absence of clear guidelines. “Facial image is your most sensitive biometric information. It’s one’s identity. Once this identity is stolen, it can turn the person’s life into a nightmare,” said the officer, who did not wish to be named.

“Freedom guarantees privacy. You can’t have free democratic societies without a solid foundation of privacy. How can it be violated in absence of a law or judicial authorisation?” he added.

The former top cop also said that “use of facial recognition software by law enforcement agencies is banned in many countries across the world just because of privacy concerns,” adding that “its inaccuracy has potential to stifle the voice of dissent.”

Abhayanand, former Bihar DGP and educationist, also felt that the technology could not be fully relied upon and could be misused, but he said it helped investigators to a great extent.

“We had used the technology in the 2005 Assembly elections in Bihar to ensure that a voter does not cast vote multiple times. First, we got video recordings from various booths, then we deployed the software to identify those who indulged in the activity. Face recognition system is based on big data analytics. You draw conclusions from huge databases. It gives false positives and false negatives as a result. The accuracy is determined by how good the software is!” he said.

Admitting that the technology “is also used wrongly and yes there is an issue of secrecy involved in it,” he said: “The law to use this technology has not so far evolved. But there is no problem with its use. It narrows down your area of investigation; however, conviction cannot take place on its basis. We have not reached to that level of accuracy. It only gives an indicator. For example, we have got an X, it will give a direction to the probe and lead to the investigation in right direction.”

But the Home Minister’s admission confirms that agencies in India are actively deploying the technology and feeding it with images from voter ID cards and driving licences to identify faces in still images and possibly CCTV footage.

Police in Delhi, Uttar Pradesh and elsewhere have been using drone and CCTV footage in various ways to monitor peaceful protests against the Citizenship Amendment Act (CAA), the National Population Register (NPR) and the National Register of Indian Citizens (NRIC).

This is why Congress MP Shashi Tharoor has been critical of the practice, saying that citizens in any progressive democracy cannot be subjected to facial recognition software without safeguards and legislation in place.

“It turns out that the Delhi Police goes around filming anti-government protests and then feeds the footage into the AFRS in order to extract identifiable faces of protesters,” Tharoor said at the two-day conclave titled ‘CPR Dialogues 2020: Policy Perspectives for 21st Century India’ organised by the Centre for Policy Research.

He said identifying “potential opposition protestors” did not fit the idea of a democracy.

Get the latest reports & analysis with people's perspective on Protests, movements & deep analytical videos, discussions of the current affairs in your Telegram app. Subscribe to NewsClick's Telegram channel & get Real-Time updates on stories, as they get published on our website.